MontyCloud DAY2 Automated Resource Tagging for AWS MAP

Have you signed an agreement to begin migrating to AWS? Or are you a Managed Service Provider (MSP) with an AWS Migration Competency delivering AWS...

8 min read

Jonathan

:

Jun 15, 2021 11:34:00 AM


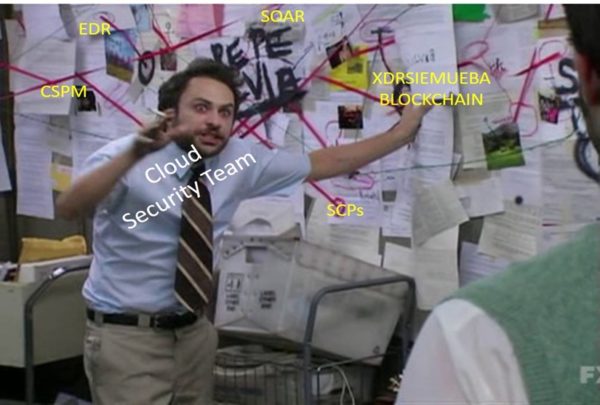

In my previous blog post, I wrote about how Cloud Security Posture Management (CSPM), while very boring, is a “necessary evil” in cloud security programs. I also wrote about how, without a proper tool, a rollout plan, or application context, it can get very noisy. I also teased a look at automated remediation to combat the deluge of alerts your CSPM tools create. With strong runbooks and contextual risk treatment, there is a way to help your beleaguered security teams. In this post, I discuss CSPM’s hyperactive cousin: Security Orchestration, Automation, and Response (SOAR), also known by other terms such as automated remediation or automated guardrails. Some go as far as to call it “Policy as Code” or “Compliance as Code”. As with anything in IT, there is no shortage of terminology.

Simply put, SOAR is a mechanism that automatically responds to a known bad configuration and changes it to a known good configuration, as close to real time as possible.

Arguably, a well-implemented CSPM tool will tell you that.

Some egregious and objectively bad (in a vacuum) issues include

to name a few.

The idea behind a SOAR platform and a reason to implement one is that in any given environment there can be thousands of misconfigurations. Hunting those theoretical thousands of issues down using instant messaging or ticketing systems is a fantastic way to slowly treat risks and demoralize security teams (P.S. Do not do that!).

Instead, why not bring in a solution that will detect these misconfigurations, ideally directly from the output of your CSPM tools, and use native APIs to automatically fix them? You are probably nodding in agreement but, like anything else, and as the title suggests, there are potentially smarter ways to invest in and implement a SOAR platform.

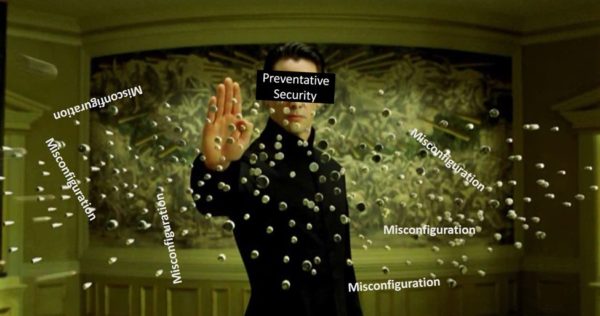

In recent years there has been much clamoring around cloud security programs beyond just the use of CSPMs and/or calling Identity “the new perimeter”. Some terms you may be familiar with are DevSecOps or the adage of “shifting left.” No doubt this is a topic for another blog in and of itself. To summarize, those ideas boil down to baking security into every stage of the development and operational lifecycle of an application and using a whole different set of tools to prevent bad things from happening. The bad things usually boil down to egregious application security errors (SQL Injection, Cross-Site Scripting) and the bad infrastructure configurations we touched on.

Another pillar of a DevSecOps approach is to implement a culture of security ownership where teams are taught what “right looks like” and can act independently, taking full ownership of their product stack, leaving centralized security teams able to focus on more nascent threats and risks. So, to wit, the best way to stop a bad thing from happening is to not let it happen at all. It turns out that “Prevention is better than cure” is not just grandma’s cliché. If you know of critically bad configurations, why even let someone apply them?

If you know what you are doing, and are willing to write and maintain complex policies, this is notionally simple. For example, on AWS you can achieve this with explicit denies in an AWS Identity and Access Management (IAM) policy, by attaching a Permissions Boundary to an IAM entity to cap what it is allowed to do, or by using AWS Organizations Service Control Policies (SCPs), which can stop bad actions across an entire AWS Organization or down to a specific Account. By using IAM condition keys, which look for specific request-level metadata, along with any of the previously mentioned IAM components, you can stop almost anything bad from happening, whether that is someone snooping in your logs, creating EC2 instances without a specific “golden” AMI, or accessing a resource over the internet instead of your private network.
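To make the explicit-deny-plus-condition-key idea concrete, here is a minimal sketch that builds an SCP-style document denying requests outside a set of approved Regions using the `aws:RequestedRegion` global condition key. The function name, the Region list, and the short list of exempted global services are assumptions for illustration, not a vetted production policy.

```python
import json


def build_region_deny_scp(allowed_regions):
    """Return an SCP-style document that denies requests outside allowed_regions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyOutsideApprovedRegions",
                "Effect": "Deny",
                # Global services are exempted so they keep working.
                # This list is illustrative only and likely incomplete.
                "NotAction": ["iam:*", "organizations:*", "sts:*", "support:*"],
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {
                        "aws:RequestedRegion": sorted(allowed_regions)
                    }
                },
            }
        ],
    }


# Hypothetical set of approved Regions.
policy = build_region_deny_scp(["us-east-1", "eu-west-1"])
print(json.dumps(policy, indent=2))
```

Generating the document from code rather than hand-editing JSON makes it easier to keep the approved list in one place and to review changes like any other pull request.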

As usual, there is a catch: you likely have tens of thousands of IAM policies, hundreds of Roles, and thousands of Users and Groups in your environment. Most likely, they get spun up and down rapidly. Unless you have a small footprint, it may not be possible to do much beyond rolling out SCPs to your AWS footprint or applying Permissions Boundaries to centrally federated roles.

The next enemy of prevention (via IAM) is a size limitation. As of this writing, the maximum size of an SCP is 5,120 bytes. This is plenty until you have nested conditional logic or are trying to prevent everything under the sun.
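A quick way to see how close a policy is to that ceiling is to measure it once serialized without optional whitespace. The sketch below assumes the 5,120 figure quoted above and uses hypothetical helper names. Treat the result as an estimate and confirm against current AWS quotas, since how whitespace is counted can depend on how the policy is submitted.

```python
import json

SCP_MAX_SIZE = 5120  # limit quoted in the text; confirm against current AWS quotas


def scp_size(policy):
    """Size in bytes of the policy serialized without optional whitespace."""
    return len(json.dumps(policy, separators=(",", ":")).encode("utf-8"))


def remaining_budget(policy, limit=SCP_MAX_SIZE):
    """How many bytes are left before the policy hits the limit."""
    return limit - scp_size(policy)


# A deliberately tiny example policy.
example = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Deny",
            "Action": ["securityhub:DisableSecurityHub"],
            "Resource": "*",
        }
    ],
}

print(f"{scp_size(example)} bytes used, {remaining_budget(example)} remaining")
```

Running a check like this in CI gives an early warning before a growing policy is rejected at deployment time.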

Start by creating a list of critical misconfigurations or actions you never want to happen, such as disabling security tools (e.g., Security Hub, Macie, IAM Access Analyzer), tampering with network or identity configurations (especially in shared/hybrid environments), or using AWS Regions or Services outside an approved set. You can see why 5,120 bytes is not enough!

Next, you must define what is so bad that you will always forcibly remediate or destroy it. This wanton destruction throws out the rules you normally follow about application context or business use cases: if your SOAR platform sees it, it should act immediately and send a Slack message (if you are so inclined) afterwards, not beforehand. Essentially this boils down to a policy of “shoot first, ask questions later” or “take the guns, leave the cannoli” depending on your preferred movie subtext.
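The "act first, notify afterwards" ordering can be sketched as a small dispatcher. Everything here is hypothetical: the `Finding` shape, the rule ID, and the stub remediation stand in for whatever your SOAR platform and cloud APIs actually provide, and notification is simulated by appending to a log.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    rule_id: str
    resource_arn: str


@dataclass
class Responder:
    # Maps a rule ID to a callable that fixes the finding.
    remediations: dict
    log: list = field(default_factory=list)

    def handle(self, finding):
        action = self.remediations.get(finding.rule_id)
        if action is None:
            # Not on the "always remediate" list: leave it for a softer process.
            self.log.append(("skipped", finding.resource_arn))
            return False
        action(finding)  # fix first...
        self.log.append(("remediated", finding.resource_arn))
        self.notify(finding)  # ...tell the owners afterwards
        return True

    def notify(self, finding):
        # Stand-in for a Slack or email integration.
        self.log.append(("notified", finding.resource_arn))


calls = []


def block_public_access(finding):
    """Stub remediation; a real one would call the cloud provider's API."""
    calls.append(finding.resource_arn)


responder = Responder(remediations={"s3-public-bucket": block_public_access})
responder.handle(Finding("s3-public-bucket", "arn:aws:s3:::example-bucket"))
print(responder.log)
```

Keeping the "always remediate" list as an explicit mapping makes it easy to show leadership exactly which rules trigger immediate action.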

However, this scorched earth tactic, while cool in the movies, is not as simple as it sounds. Unless you have no separation of duties AND you have the most trusted security team ever, you will need to sell this scorched earth policy to your technical and business leaders (P&L heads, CTOs, Heads of Architecture, etc.).

This “sale” should take the form of educational materials, clearly written technical guidelines, and a demonstration of how you are treating the relevant risks associated with the lack of a particular control that your SOAR platform will be enforcing. It is also a common courtesy to send messages or emails when you take these actions, as teams may not know despite the educational materials being out there, probably just because they forgot to update a Terraform Module somewhere.

As a bonus, introduce them to the MontyCloud DAY2™ Platform, which has dozens of “golden” ready-to-deploy templates and blueprints that have many of these configurations taken care of. Once deployed, DAY2™ also continuously scans for known bad configurations and shows you “what right looks like.” DAY2™ can also respond to known bad configurations, integrate into instant messaging and issue management tools, secure your host-based access, and drive home that application context you so desperately need.

Besides monitoring the actions taken by your SOAR platform for the sake of sending warning messages, collecting metrics is a great way to show the value of your security program, measure its success, and measure the return on investment of your tooling choices. For instance, if you have good data on how long it takes teams to track down and remediate an issue, you can use that data and blended labor costs to show cost and time savings over a period relative to how fast your SOAR platform operates. You can use metrics to measure the efficacy of a DevSecOps program rollout or an educational campaign you launched for a particularly bad issue. Finally, metrics are useful for the “sticker shock” of remediations-over-time to justify onboarding additional resources (e.g., IAM Experts) or tooling (e.g., SAST, CI tools, “enterprise” Infrastructure-as-Code platforms). In the event you cannot get initial buy-in for this more “scorched earth SOAR”, metrics can help make the business case for remediating immediately, and not after you have been left on read in Slack for 48 hours.
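The labor-cost comparison described above is simple arithmetic. As a rough sketch, with a hypothetical function name and placeholder inputs (the counts, hours, and hourly rate below are invented for illustration, not measured data):

```python
def remediation_savings(findings_fixed, manual_hours_per_finding,
                        automated_hours_per_finding, blended_hourly_rate):
    """Estimate hours and cost saved by automated remediation over a period."""
    hours_saved = findings_fixed * (
        manual_hours_per_finding - automated_hours_per_finding
    )
    return {
        "hours_saved": hours_saved,
        "cost_saved": hours_saved * blended_hourly_rate,
    }


# Placeholder numbers: 400 findings in the period, 1.5 hours each by hand,
# effectively zero human time when automated, at a blended rate of 85 per hour.
savings = remediation_savings(400, 1.5, 0.0, 85)
print(savings)
```

The estimate is only as good as the manual time-to-remediate figure, so it is worth sourcing that number from ticket data rather than guessing.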

By this point you are preventing really bad things from happening, immediately righting any wrongs in all environments, and collecting metrics to show off just how awesome your team is. You are almost at the finish line! In the Excel spreadsheet that you probably used to sort all your CSPM findings and “naughty” actions, you may have a few dozen rows filled with findings that are still bad, right? The reality is that you will implement a graduated response based on severity of risk. And you will need to sell that too. Infrastructure owners and application owners can be pesky.

Getting buy-in and having application context at the ready is key to responding quickly to bad actions that did not quite meet the bar for prevention or immediate response. For things that are bad but can be remediated, you should still go after them, but instead of remediating right away you can send messages to the owning teams. For example, this is a good exercise when attempting to enforce encryption or Account-level settings such as S3 Public Access Blocks or EBS Encryption. If you go around enabling encryption on every SNS Topic, SQS Queue, Kinesis Data Stream, or Kinesis Firehose Delivery Stream, you will likely break a service due to permissions issues around encryption. Additionally, Account-level EBS Encryption will use a default AWS-managed key if you do not specify a KMS CMK, and if a team wants to share snapshots or AMIs across Regions or Accounts they suddenly cannot do that, because the managed key is unique per Account per Region and cannot be controlled by the user.

This method of automated outreach serves as a great educational tool but, like anything, should be communicated and measured beforehand. Using your CSPM tool, you can find the “clusters” where there is an outsized number of findings compared to others, whether it is an application group or a specific Account or Business Unit. Those people will appreciate a heads-up that they will get several hundred Slack messages and emails from your tool, which gives them a chance to clean up. While it is not the intended purpose of SOAR, sending instructions on how to fix something and the reason why may be a net benefit in the long term, as it encourages development teams to learn. This technique can be used for a “Crawl-Walk-Run” style of rollout for a DevSecOps program, where you prompt teams to fix things, then steadily reduce their agency and enforce adoption of mandated tools and processes. All that said, you do not need to wait for these items to be fixed by the team. At the end of the day a message is a courtesy, and you should set time periods per environment (Production, UAT, Staging, etc.) after which your SOAR platform will fire away.
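The per-environment time periods can be expressed as a small decision function: notify on first sight, wait during the grace period, remediate once it expires. The grace periods below are hypothetical placeholders, and the choice to treat unknown environments with the shortest configured period is an assumption of this sketch.

```python
from datetime import datetime, timedelta

# Hypothetical grace periods; pick values that match your own policy.
GRACE_PERIODS = {
    "production": timedelta(hours=24),
    "uat": timedelta(days=3),
    "staging": timedelta(days=7),
}


def next_step(environment, first_notified_at, now):
    """Return 'notify', 'wait', or 'remediate' for a finding."""
    if first_notified_at is None:
        return "notify"
    # Unknown environments fall back to the strictest configured period.
    grace = GRACE_PERIODS.get(environment.lower(), min(GRACE_PERIODS.values()))
    if now - first_notified_at >= grace:
        return "remediate"
    return "wait"


now = datetime(2021, 6, 15, 12, 0)
print(next_step("Production", None, now))
print(next_step("production", now - timedelta(hours=25), now))
print(next_step("staging", now - timedelta(days=1), now))
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the logic easy to test and to replay against historical findings.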

Keeping the idea of application context in mind, you still need to account for use cases where a bad configuration may make sense for a specific application or business unit. Maybe there is an S3 bucket that holds static assets and for some reason the owners do not want to use CloudFront, or the business “accepted the risk” (hopefully with a quantitative risk management approach). Most modern SOAR platforms and similar homebrew solutions will account for specific canonical exemptions: maybe you look up a specific Tag, or track the unique ID or Amazon Resource Name (ARN) of the resource. If you go with the Tag method, ensure that it is not easy to guess and that you monitor these exemptions with metrics or a Governance/Risk/Compliance (GRC) tool, or everyone will be tempted to just slap the tag onto their “sandbox”. Any exceptions should be reviewed in accordance with any policies you have, and should account for those exempted resources’ lifecycle, such as when they are terminated or no longer required.
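One way to keep tag-based exemptions from being abused is to require that the tag match an approved, time-limited entry tracked by ARN. The sketch below combines both lookup methods mentioned above. The tag key, the registry shape, and the ticket ID are all hypothetical, and a real registry would more likely live in a GRC tool or database than in a dict.

```python
from datetime import date

# Hypothetical tag key, deliberately hard to guess.
EXEMPTION_TAG_KEY = "sec-exempt-7f3a9c"


def is_exempt(resource_arn, tags, registry, today):
    """A resource is exempt only if its ARN is registered, its tag value
    matches the approved ticket, and the exemption has not expired."""
    entry = registry.get(resource_arn)
    if entry is None:
        return False
    if tags.get(EXEMPTION_TAG_KEY) != entry["ticket"]:
        return False
    return today <= entry["expires"]


# Hypothetical approved exemption for a static-assets bucket.
registry = {
    "arn:aws:s3:::static-assets-example": {
        "ticket": "RISK-1234",
        "expires": date(2021, 12, 31),
    },
}
tags = {EXEMPTION_TAG_KEY: "RISK-1234"}

print(is_exempt("arn:aws:s3:::static-assets-example", tags, registry, date(2021, 6, 15)))
```

Because the exemption carries an expiry date, a forgotten exception lapses on its own instead of living for as long as the resource does.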

To close, let’s quickly recap our “Smart SOAR” tenets of sorts which can be used as a quick checklist for your current progress or a roadmap for future greatness.

If you do not want to drown in security events and tickets, it is important to implement automated rules-based governance downstream as close to the application owners as possible. While CSPM tools can help you monitor and flag vulnerabilities, SOAR can help automate actions and remediations.

MontyCloud DAY2™ has taken a cloud-native approach and combined CSPM and SOAR. You do not have to install any third-party agents. Getting started is as easy as connecting your account(s). There is no way around implementing CSPM and SOAR tools if you want to scale. Pick one and get started today!
